Digital support for visual inspections using deep learning

DOI: 10.60048/exm20_36

In accordance with IEC 60079-0, manufacturers of explosion-protected equipment are obligated to perform every routine test required by the IEC 60079 series of standards. In addition, all necessary verification measures and inspections must be performed in order to ensure that the equipment corresponds to the documentation. [1]

Overall, comprehensive quality assurance measures are required in order to ensure that explosion-protected products are safe. However, these are typically costly in terms of time, finances and staff. Visual inspections, in particular, are currently performed by members of staff to a large extent. Unfortunately, their ability to identify errors varies from person to person and depending on their alertness.

The use of deep learning models offers huge potential for reducing the error rate in visual inspections. Smart object recognition enables product errors to be identified with consistent recognition accuracy. The technology also opens up new opportunities for traceability: data (the image together with the prediction of the object recognition algorithm) can be recorded during each visual inspection and assigned to the corresponding project.

Deep Learning

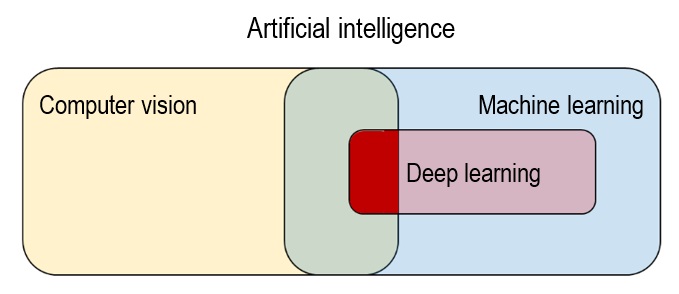

Deep learning is a field within machine learning; both of these disciplines fall within the scope of artificial intelligence, as shown in Figure 1. Machine learning refers to all systems that learn independently and without explicit instructions, and that adapt by using algorithms and statistical models to analyze data and draw conclusions from patterns.

The term deep learning applies when deep neural networks are used for this purpose. The type of data being processed is not restricted, which means that text, language and images can all be analyzed.

The processing of visual data additionally overlaps with the field of computer vision, as shown in Figure 1. This field includes all systems that collect visual data, process this data and use it to make decisions.

Both computer vision and machine learning are based on identifying "features". In terms of visual data, this can include corners, edges or more complex structures that are typical of the object to be detected.

By identifying and combining relevant features, objects can be assigned to specific classes. In traditional machine learning and computer vision systems, these features need to be manually extracted from the input data and selected, as depicted in Figure 2a. Manually defining the features is, however, a very time-consuming task that requires a great deal of expert knowledge. Furthermore, there is always a risk that scientists and engineers will make mistakes when selecting the relevant features. The algorithm's success at identifying objects, languages or other phenomena is highly dependent on the developers' skills.[2][3]

In contrast, deep learning integrates the process of learning features and classifying objects into a single model by using deep artificial neural networks to extract relevant features from the input data, as shown in Figure 2b.

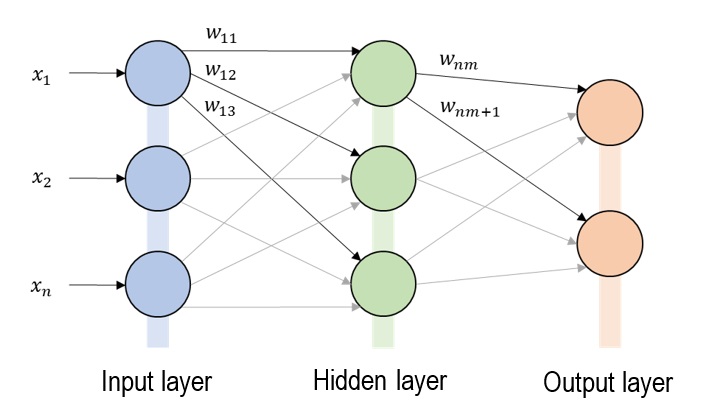

An artificial neural network is a computer model that emulates the neural structures of the human brain. Networks of this kind consist of a number of computing units ("neurons") that are connected to one another. Each neuron receives the weighted sum of the outputs of all the neurons connected upstream of it as its input value. The neuron's resulting output is in turn weighted and passed on to all following neurons, as shown in Figure 3.
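The weighted-sum behaviour of a neuron can be illustrated with a few lines of Python. This is only a minimal sketch: the article does not name an activation function or a bias term, so the sigmoid activation, the `bias` parameter and all weight values below are assumptions chosen for illustration.

```python
import math


def neuron_output(inputs, weights, bias=0.0):
    """Return the activation of one neuron.

    The neuron forms the weighted sum of its inputs and squashes it with a
    sigmoid function (the sigmoid and the bias are illustrative assumptions).
    """
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))


def layer_output(inputs, weight_rows, biases):
    """Evaluate a whole layer: one row of weights and one bias per neuron."""
    return [neuron_output(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Two input values feed a hidden layer of two neurons; the hidden activations
# are then weighted again and passed on to a single output neuron.
hidden = layer_output([0.5, -1.0], [[1.0, 2.0], [-1.0, 0.5]], [0.0, 0.0])
output = neuron_output(hidden, [1.0, -1.0])
```

Stacking more calls to `layer_output` between the input and the output neuron corresponds to adding hidden layers.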

The number and depth of the hidden layers can vary; when a high number of hidden layers are used, we refer to this as a deep neural network, and therefore deep learning. In order to identify objects using deep learning, features such as edges, corners, contours and object parts are abstracted from the training data, layer by layer, and entered into the classification layer. The training data contains information about the object classes, so that the model prediction can be compared to the specified object class. The prediction model can be adapted by changing the weights for as long as necessary until the prediction accuracy has been optimized. Human intervention is kept to a minimum at this stage, which significantly reduces the time spent working on the system. In addition, the models require less expert knowledge. In the end, deep learning offers a higher degree of flexibility, as the models can be retrained using a user-defined data set for each application.
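The idea of adapting the weights until the prediction matches the labelled training data can be sketched on a deliberately tiny scale. The example below trains a single sigmoid neuron with gradient descent on a toy data set (the logical AND of two inputs). It is not a deep network and not the training procedure used in the project; the data, learning rate and number of epochs are assumptions chosen so that the example runs quickly.

```python
import math


def predict(weights, bias, features):
    """Sigmoid of the weighted sum: the model's estimate for class 1."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train(samples, labels, epochs=2000, learning_rate=0.5):
    """Repeatedly compare prediction and label, then nudge the weights."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(samples, labels):
            # Difference between the prediction and the specified class.
            error = predict(weights, bias, features) - label
            # Move each weight against the error, scaled by its input.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


# Toy training data: the label is 1 only when both inputs are 1.
samples = [[0, 0], [0, 1], [1, 0], [1, 1]]
labels = [0, 0, 0, 1]

weights, bias = train(samples, labels)
predicted = [round(predict(weights, bias, s)) for s in samples]
```

In a deep network the same compare-and-adjust loop runs over many layers of weights at once, which is what frameworks such as Darknet automate.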

Supporting routine tests using deep learning

In principle, when manufacturing explosion-protected equipment, it is essential that all parts produced correspond to the certified test sample. Harmonized standard IEC 60079-0 therefore specifies that all required verification measures and inspections must be performed in order to ensure that equipment corresponds to the documentation [1]. This conformity can be ensured easily in automated processes. However, if manual tasks are performed, correct production is highly dependent on the attentiveness of the employees involved. The use of automated monitoring methods is a practical way to prevent potential product errors from being overlooked, both during production and at the final quality check after production is complete (e.g. during the routine test). The production process is recorded using a camera and analyzed in real time by an object recognition algorithm based on deep learning, which means that information about production errors can be issued instantaneously. For this purpose, typical production errors can be integrated into the object recognition model.

The same process can be implemented for the final routine test. At this stage, too, typical product errors can be integrated into an object recognition model. The sample can be inspected using a mobile end device (e.g. a smartphone with a camera and integrated object recognition model) or at a permanently installed workstation equipped with cameras. The use of deep learning for visual workpiece inspections offers huge potential, especially for products with many different variants. As a rule, other visual monitoring methods require time-consuming manual feature engineering, which means that they are only rarely cost-effective when many variants are involved.

Application example

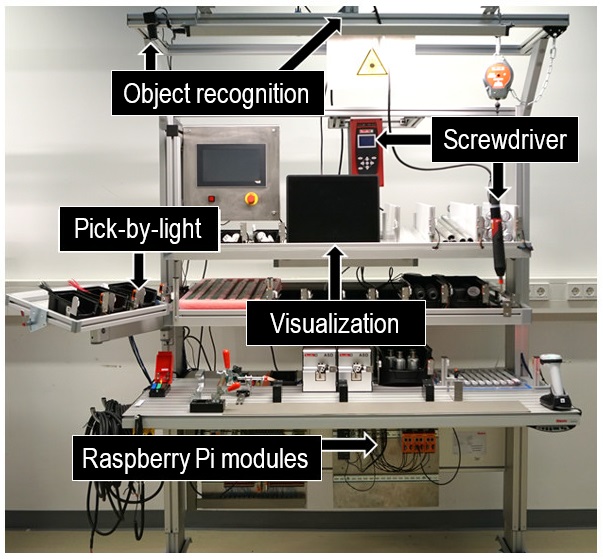

A real-life example of how this system is used can be seen in the "Smart Assembly" research project funded by the Carl Zeiss Foundation; this project was performed at the Ernst Abbe University of Applied Sciences in Jena and examined different digital assistance systems in order to optimize manual mounting. One aspect of the project involved constructing a mounting workstation that uses an object recognition system based on deep learning to ensure that the assembly process runs correctly. The workplace is equipped with a digital assistance system made up of the following components: object recognition module, pick-by-light module, screwdriver module and digital visualization module, as shown in Figure 4.

The individual modules are each linked to an industrial Raspberry Pi and communicate with each other using the MQTT network protocol. The current workpiece status identified by the object recognition module is used as key information. The status can take one of the following values: Workpiece present, Workpiece not present, or Assembly defective. This information enables conclusions to be drawn about the current work step and a corresponding instruction to be displayed using the visualization module.
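How a status message of this kind might be represented can be sketched as follows. The article does not describe the topic names or the payload format, so the topic string, the JSON layout and the instruction texts are hypothetical. The sketch deliberately stops short of a real MQTT client and only shows the payload that a publisher would send and a subscriber would decode.

```python
import json
from enum import Enum


class WorkpieceStatus(Enum):
    """The three workpiece states named in the text."""
    PRESENT = "workpiece_present"
    NOT_PRESENT = "workpiece_not_present"
    DEFECTIVE = "assembly_defective"


# Hypothetical topic on which the object recognition module publishes.
STATUS_TOPIC = "workstation/object_recognition/status"


def encode_status(status):
    """Serialize a status into a JSON payload (format is an assumption)."""
    return json.dumps({"status": status.value})


def decode_status(payload):
    """Parse a payload back into a WorkpieceStatus on the receiving side."""
    return WorkpieceStatus(json.loads(payload)["status"])


def instruction_for(status):
    """Choose the text the visualization module would display."""
    if status is WorkpieceStatus.DEFECTIVE:
        return "Warning: assembly defective, please correct it."
    if status is WorkpieceStatus.NOT_PRESENT:
        return "Place the workpiece in the fixture."
    return "Continue with the current work step."


payload = encode_status(WorkpieceStatus.DEFECTIVE)
message = instruction_for(decode_status(payload))
```

Keeping the states in an enumeration means that an unknown status string raises an error on decoding instead of being silently displayed.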

For instance, a warning can be displayed for the person performing the assembly work if a defective assembly is detected. The pick-by-light module and screwdriver module are activated depending on the current work step. In order to derive the work steps from the detected assembly parts, the key objects that should be present or not present are defined for each step. This approach guarantees flexibility for changing workflows.
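The rule-based derivation of the work step from key objects can be sketched with plain sets. The object names, step names and rule order below are hypothetical and only loosely follow the light fitting described in this article; the project's actual rule set is not given in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepRule:
    """A work step and the key objects that must or must not be detected."""
    name: str
    must_be_present: frozenset
    must_be_absent: frozenset = frozenset()

    def matches(self, detected):
        has_required = self.must_be_present <= detected
        has_forbidden = bool(self.must_be_absent & detected)
        return has_required and not has_forbidden


# Hypothetical workflow: each step is recognized by what is already mounted
# and what is still missing.
RULES = [
    StepRule("insert lighting unit",
             frozenset({"tube"}), frozenset({"lighting_unit"})),
    StepRule("screw on sleeve",
             frozenset({"tube", "lighting_unit"}), frozenset({"sleeve"})),
    StepRule("secure sleeve with threaded pin",
             frozenset({"tube", "lighting_unit", "sleeve"}),
             frozenset({"threaded_pin"})),
]


def current_step(detected_classes):
    """Return the first step whose rule matches the detected object classes."""
    detected = frozenset(detected_classes)
    for rule in RULES:
        if rule.matches(detected):
            return rule.name
    return None


step = current_step({"tube", "lighting_unit"})
```

Because the workflow lives entirely in the `RULES` list, a changed assembly sequence only requires editing that list, not the detection model.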

The YOLOv4 model was used in the object recognition module because it is particularly suitable for achieving highly accurate results in real time [4]. In order to maximize the inference speed, the trained model is optimized using the TensorRT framework [5]. A total of 1512 training images containing 5485 object instances and 790 validation images containing 1468 object instances were used to train the model. The training data comprised 41 individual object classes; each class was represented by an average of 134 training instances. Training was performed on an Nvidia GeForce 1650 Super GPU using the Darknet framework [6]. The mean average precision (mAP) indicates the prediction accuracy of the model; after 6 training periods, it reached a value of 99.81% (mAP@0.5). This shows that the model is able to predict all classes to a satisfactory level. Collecting the training and validation data, as well as annotating all object instances, took approximately 10 staff hours of work.
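A mean average precision figure rests on a rule for deciding when a predicted bounding box counts as correct. A common convention in object detection is to require that the intersection over union (IoU) between the predicted box and the annotated box reaches a threshold. The sketch below shows this criterion only, not the full mAP calculation; the box format `(x_min, y_min, x_max, y_max)` and the default threshold of 0.5 are assumptions.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlapping rectangle (zero if boxes are disjoint).
    overlap_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    overlap_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = overlap_w * overlap_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def is_correct_detection(predicted_box, annotated_box, threshold=0.5):
    """A detection counts as correct when its IoU reaches the threshold."""
    return iou(predicted_box, annotated_box) >= threshold


overlap = iou((0, 0, 2, 2), (1, 1, 3, 3))
accepted = is_correct_detection((0, 0, 10, 10), (0, 0, 10, 8))
```

The dataset figures can be checked in the same spirit: 5485 training instances spread over 41 classes give about 134 instances per class, as stated.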

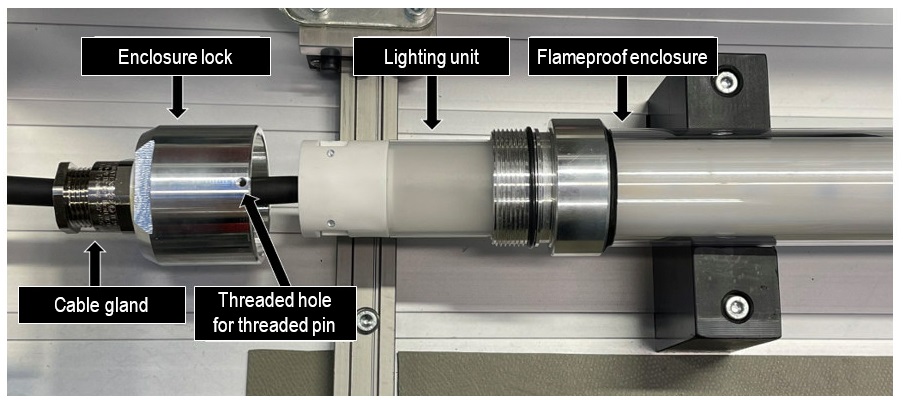

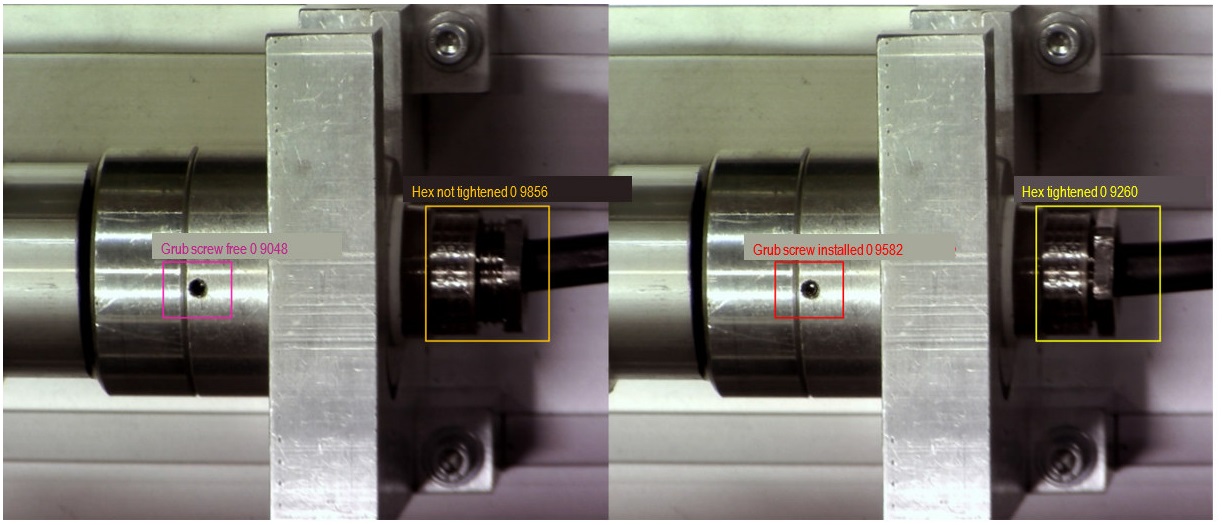

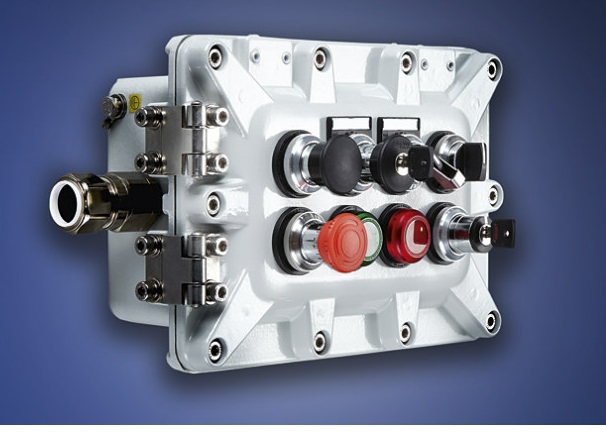

At the described workplace, a tubular light fitting in a flameproof enclosure from R. STAHL was assembled. This consists of 36 component parts. In order to guarantee explosion protection, the lighting unit is inserted into a tube designed to be flameproof, which is closed using a sleeve with 7 thread turns, as shown in Figure 5. The threaded joint is designed in accordance with the requirements of IEC 60079-1 [7]. In addition, the sleeve features a cable gland, which seals around the cable when a defined torque is applied. Since, according to IEC 60079-0, parts that are required to maintain the respective type of protection must only be removable using a tool, the sleeve is also secured using a threaded pin. Correct mounting of the threaded joint and correct tightening of the cable gland are particularly crucial to quality, since they are key to ensuring explosion protection.

The object recognition system detects both mounting steps reliably (see Figure 6) and only switches to the next mounting step when the mounting operation has been performed correctly. This ensures that the corresponding mounting steps are not skipped. In order to guarantee traceability, images and/or videos of critical mounting steps can be recorded and stored in a database.

This was not implemented in the current research project, but would be easy to integrate into the system.
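One possible shape for such a traceability record is sketched below using Python's built-in `sqlite3` module. Since this function was not part of the research project, everything here is an assumption: the table layout, the column names, the order number and the image path are hypothetical, and the image itself is referenced by path rather than stored in the database.

```python
import sqlite3
from datetime import datetime, timezone


def open_store(path=":memory:"):
    """Open (or create) the inspection database; in-memory by default."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS inspections (
               id INTEGER PRIMARY KEY,
               order_id TEXT NOT NULL,
               step TEXT NOT NULL,
               result TEXT NOT NULL,
               confidence REAL NOT NULL,
               image_path TEXT NOT NULL,
               recorded_at TEXT NOT NULL
           )"""
    )
    return conn


def record_inspection(conn, order_id, step, result, confidence, image_path):
    """Store one inspection result together with a UTC timestamp."""
    conn.execute(
        "INSERT INTO inspections "
        "(order_id, step, result, confidence, image_path, recorded_at) "
        "VALUES (?, ?, ?, ?, ?, ?)",
        (order_id, step, result, confidence, image_path,
         datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()


def history(conn, order_id):
    """Return all recorded steps for one order, oldest first."""
    rows = conn.execute(
        "SELECT step, result, confidence FROM inspections "
        "WHERE order_id = ? ORDER BY id",
        (order_id,),
    )
    return rows.fetchall()


conn = open_store()
record_inspection(conn, "order-0001", "threaded joint", "ok", 0.98,
                  "images/order-0001_threaded_joint.png")
records = history(conn, "order-0001")
```

Passing a file path to `open_store` instead of the default would persist the records between sessions.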

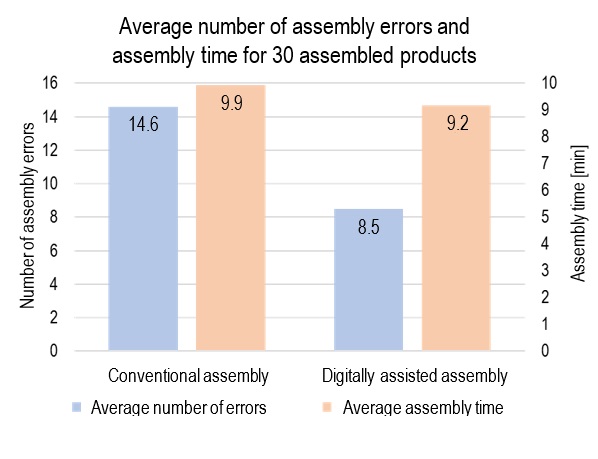

For the overall mounting of the tubular light fitting, the digital assistance system with object recognition reduced errors by 42% compared to a conventional workplace, as shown in Figure 7. For this comparison, 15 tests were performed per workplace, in which the test participants were instructed to assemble tubular light fittings for a period of 8 hours. The average assembly time shows that the use of object recognition does not lead to any apparent delays.

As the number of assembled tubular light fittings varied between test participants due to variations in their assembly speed, the first 30 assembled products were used as the basis for calculations.

Conclusion

On the whole, there is huge potential to optimize quality assurance for explosion-protected products through the use of deep learning. For products with a high number of variants, in particular, the flexibility of these systems means that they represent a cost-effective and time-saving alternative to other computer vision methods, which require extensive manual feature engineering. However, since the process of acquiring training data and training the models takes some time, the use of deep learning is only cost-effective when products are to be produced in a sufficiently high quantity.

Since the systems considered here are only capable of checking visual features, they are not suitable as a replacement for comprehensive quality assurance measures. In addition, they give rise to questions regarding legal assessment in the event of a system error. For these reasons, deep learning should be seen as a means of complementing and supporting the work performed by staff.

References

[1] DIN EN IEC 60079-0, September 2019. Explosive atmospheres - Part 0: Equipment - General requirements

[2] Wang, J.; Ma, Y.; Zhang, L.; Gao, R.X.: Deep learning for smart manufacturing: Methods and applications. In: Journal of Manufacturing Systems, Volume 48 (2018), pp. 144-156 [Accessed on 15.02.2022]. Available at: https://doi.org/10.1016/J.JMSY.2018.01.003

[3] O'Mahony, N.; Campbell, S.; Carvalho, A.; Harapanahalli, S.; Hernandez, G.; Krpalkova, L.; Riordan, D.; Walsh, J.: Deep Learning vs. Traditional Computer Vision. In: Computer Vision Conference 2019. Las Vegas, Nevada, United States [Accessed on 15.02.2022]. Available at: https://arxiv.org/ftp/arxiv/papers/1910/1910.13796.pdf

[4] Bochkovskiy, A.; Wang, C.-Y.; Liao, H.-Y. M.: YOLOv4: Optimal Speed and Accuracy of Object Detection, 2020 [Accessed on 02.02.2022]. Available at: arxiv.org/abs/2004.10934

[5] NVIDIA TensorRT Documentation. NVIDIA, 2021 [Accessed on 03.02.2022]. Available at: https://docs.nvidia.com/deeplearning/tensorrt/developer-guide/index.html

[6] Redmon, J.: Darknet: Open Source Neural Networks in C [Accessed on 02.02.2022]. Available at: pjreddie.com/darknet/

[7] DIN EN 60079-1, April 2015. Explosive atmospheres - Part 1: Equipment protection by flameproof enclosures "d"

More Article

Investigation of hydrogen generation in subsea umbilicals

In a growing number of cases, a build-up of hydrogen has been detected in the junction boxes located above the surface of the water, where…

Ex in sight

![[Translate to Englisch:] [Translate to Englisch:]](/fileadmin/user_upload/magazin/artikel/20_27_Ex_im_Blick/1_Ex_im_Blick_Teaser.jpg)

Incorrect storage of hazardous chemical substances and inadequate surveillance of storage areas all too often result in catastrophic…

The Need for Integrated Project Management

Reducing project management and development time alone has the potential to deliver 15 to 30 percent in cost savings

Static and dynamic material stresses acting on Ex "d" enclosures

Flameproof enclosures must be subjected to certain testing, including of their ability to withstand pressure

PLP NZ celebrating 45 years with R. STAHL

45 years ago, three things came together, R. STAHL, PLP (Electropar Ltd), and the willingness to adopt innovative new hazardous area…

Emergency lighting

Central battery systems as emergency lighting systems offer secure protection in the event of a power supply failure

Sensing nonsense: When appearances are deceptive

Process engineering systems are generally controlled by measuring process variables such as temperature, pressure, quantity, fill level or…

Digital support for visual inspections using deep learning

The use of deep learning models offers huge potential for reducing the error rate in visual inspections. Smart object recognition enables…

Lightning and surge protection in intrinsically safe measuring…

According to Directive 1999/92/EC [1], the user or employer are obliged to assess the explosion hazard posed by their system and they must…

Ex assemblies, Part 1

The discussion about Ex assemblies is as old as the EU ATEX Directive, and now dates back almost 20 years

How R. STAHL TRANBERG is Meeting the Digitalization Demands of…

Digitalization and the integration of data and solutions is playing a pivotal role in the shipping and maritime industries today, having a…

The "PTB Ex proficiency testing scheme"

The "PTB Ex proficiency testing scheme" (PTB Ex PTS) is a project that involves developing interlaboratory comparison programmes to assess…

Non-electrical explosion protection

Manufacturers and users must acquire knowledge on the subject of non-electrical explosion protection, in order to assess the application,…

Certification in South Africa

Certification in South Africa has certain key differences from international certification, e.g. IECEx or ATEX

Global conformity assessment using the IECEx system

![[Translate to Englisch:] [Translate to Englisch:]](/fileadmin/_processed_/c/e/csm_Einstiegsbild_IECEx_Paragraf_38414b50d0.jpg)

IEC Technical Committee (TC) 31, tasked with developing a global conformity assessment system for explosion-protected products

A mine of experience in industry

![[Translate to Englisch:] [Translate to Englisch:]](/fileadmin/_processed_/f/f/csm_Einstiegsbild_e_tech_IEC_6270d7c66a.jpg)

He takes over from Thorsten Arnhold, who chaired the System for the past six years

Conformity assessment in the USA

In contrast to the international IEC/IECEx community and the European Union, the conformity assessment landscape in the USA is very…

25 Years of the Zone System in the USA

In the area of explosion protection, the publication of Article 505 in the 1996 National Electrical Code (NEC®) was seen as a giant step…

An Ex-citing future with hydrogen

![[Translate to Englisch:] [Translate to Englisch:]](/fileadmin/_processed_/0/1/csm_shutterstock_1644506059_7d60f29da8.jpg)

Aside from a few exceptions based on the effect of gravitation and radioactivity, hydrogen is the source of most primary energy that makes…

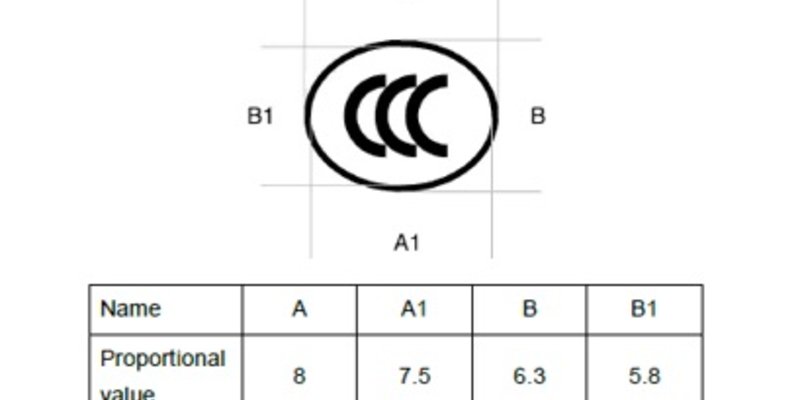

Certification of Ex products

Since 1st October 2019, new CCC certification rules have been in place for Ex products sold in China

![Figure 2: a) Traditional computer vision and machine learning sequence b) Deep learning sequence (according to [2])](/fileadmin/user_upload/magazin/artikel/20_36_Digitale_Unterst%C3%BCtzung_der_Sichtpr%C3%BCfung_mittels_Deep_Learning/Bild_2_EN.jpg)